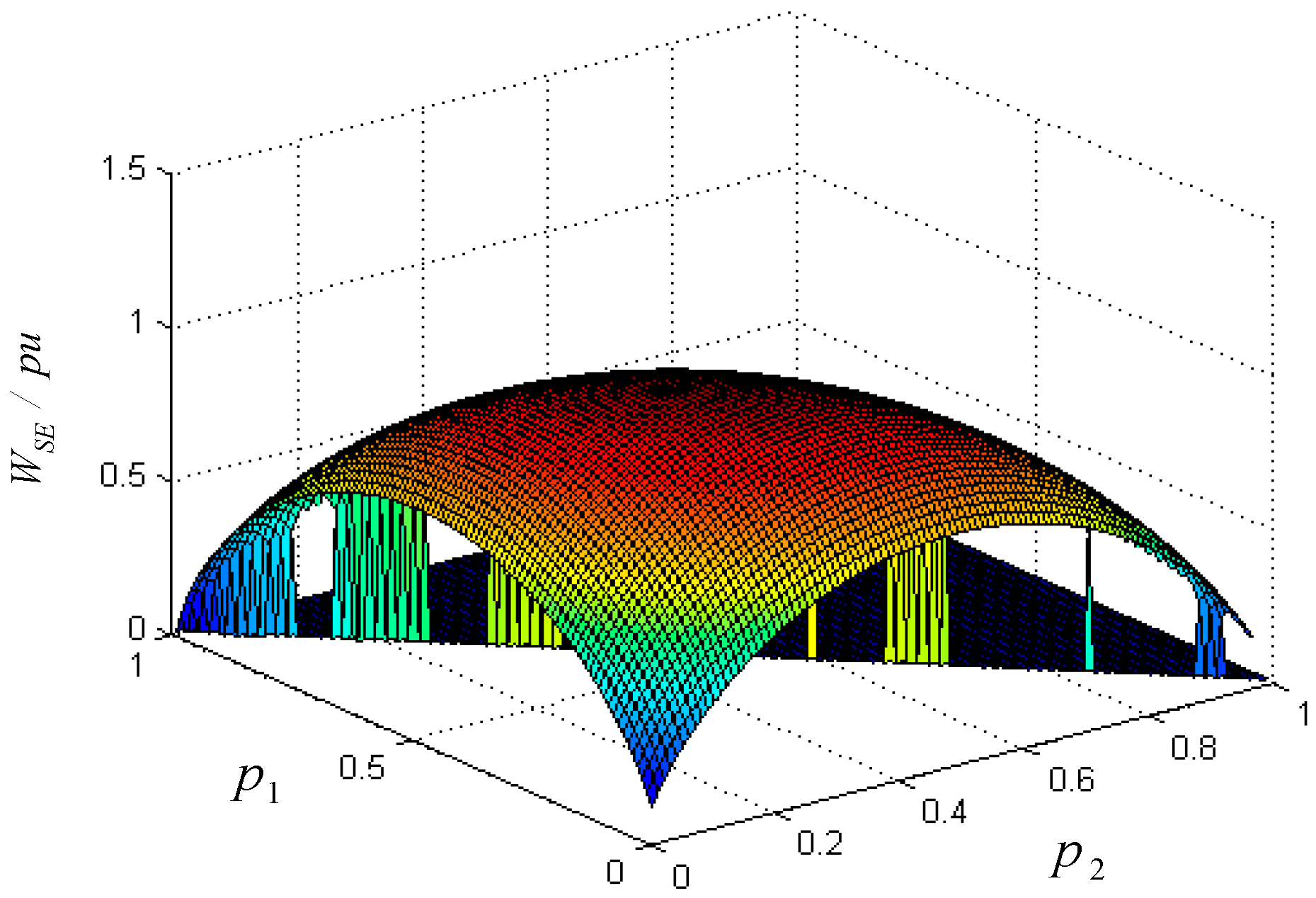

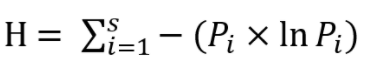

Shannon named this measure of uncertainty *entropy*, because the form of $H$ bears a striking similarity to that of Gibbs entropy in statistical thermodynamics.

Shannon observes that $H$ has many other interesting properties:

- Entropy $H$ is 0 if and only if exactly one event has probability 1 and the rest have probability 0. (Uncertainty vanishes only when we are certain about the outcomes.)
- Entropy $H$ is maximized when the $p_i$ values are equal.
- The joint entropy of two events is less than or equal to the sum of the individual entropies, $H(x, y) \le H(x) + H(y)$, and $H(x, y) = H(x) + H(y)$ only when $x$ and $y$ are independent events.
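You can check these properties on small examples. The sketch below is illustrative only, not a proof. It assumes base-2 logarithms (entropy in bits). The helpers `entropy()` and `marginals()` and the example distributions are hypothetical and were chosen only to show each property.

```python
import math
from itertools import product


def entropy(probs):
    """Shannon entropy in bits; zero-probability terms contribute nothing."""
    return sum(p * math.log2(1 / p) for p in probs if p > 0)


def marginals(joint):
    """Split a joint table {(x, y): p} into the distributions of x and of y."""
    px, py = {}, {}
    for (x, y), p in joint.items():
        px[x] = px.get(x, 0.0) + p
        py[y] = py.get(y, 0.0) + p
    return px, py


# Property 1: H = 0 when exactly one event is certain.
certain = [1.0, 0.0, 0.0]
print(entropy(certain))                      # 0.0

# Property 2: over the same 4 events, equal probabilities give the largest H.
uniform = [0.25, 0.25, 0.25, 0.25]
skewed = [0.4, 0.3, 0.2, 0.1]
print(entropy(uniform), entropy(skewed))     # 2.0, then a smaller value

# Property 3: H(x, y) <= H(x) + H(y), with equality for independent x and y.
independent = {(x, y): 0.25 for x, y in product([0, 1], repeat=2)}
correlated = {(0, 0): 0.5, (1, 1): 0.5}      # y always equals x
for name, joint in [("independent", independent), ("correlated", correlated)]:
    px, py = marginals(joint)
    h_joint = entropy(joint.values())
    h_sum = entropy(px.values()) + entropy(py.values())
    print(f"{name}: H(x,y) = {h_joint:.3f}, H(x) + H(y) = {h_sum:.3f}")
```

In the independent case both sides come out to 2 bits. In the correlated case the joint entropy drops to 1 bit, because knowing $x$ removes all uncertainty about $y$.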

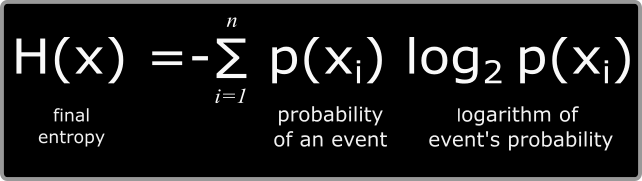

Let's see it in action. Suppose we have a discrete set of possible events $1, \ldots, n$ that occur with probabilities $(p_1, p_2, \ldots, p_n)$. Claude Shannon asked the question:

> Can we find a measure of how much "choice" is involved in the selection of the event or of how uncertain we are of the outcome?

For example, suppose we have a coin that lands on heads with probability $p$ and tails with probability $1-p$. If $p=1$, the coin always lands on heads. Since there is no uncertainty, we might want to say the uncertainty is 0. However, if the coin is fair and $p=0.5$, we maximize our uncertainty: it's a complete toss-up whether the coin lands heads or tails. We might want to say the uncertainty in this case is 1.

In general, Shannon wanted to devise a function $H(p_1, p_2, \ldots, p_n)$ describing the uncertainty of an arbitrary set of discrete events. He thought that "it is reasonable" that $H$ should have three properties:

First, $H$ should be a continuous function of each $p_i$: a small change in a single probability should result in a similarly small change in the entropy (uncertainty).

Second, if each event is equally likely ($p_i = 1/n$), $H$ should increase as a function of $n$: the more events there are, the more uncertain we are.

Finally, entropy should be recursive with respect to independent events. Suppose we generate a random variable $x$ by the following process. Flip a fair coin. If it lands heads, $x=0$. If the flip was tails, flip the coin again: if the second flip is heads, $x=1$; if tails, $x=2$. The three outcomes therefore have probabilities $1/2$, $1/4$ and $1/4$, so we can compute the entropy directly as $H(\tfrac{1}{2}, \tfrac{1}{4}, \tfrac{1}{4})$. However, if we view $x$ as a first choice that is followed, half of the time, by a second independent choice, the independence property tells us that this relationship should hold:

$$H\left(\tfrac{1}{2}, \tfrac{1}{4}, \tfrac{1}{4}\right) = H\left(\tfrac{1}{2}, \tfrac{1}{2}\right) + \tfrac{1}{2}\, H\left(\tfrac{1}{2}, \tfrac{1}{2}\right)$$
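You can check the coin example and the three properties numerically. This is a minimal sketch that assumes entropy is measured in bits (base-2 logarithm), so a fair coin gives exactly 1. The `entropy()` helper is a hypothetical name used only for illustration.

```python
import math


def entropy(probs):
    """Shannon entropy in bits: H = sum of p_i * log2(1 / p_i), skipping p_i = 0."""
    return sum(p * math.log2(1 / p) for p in probs if p > 0)


# The coin example: a certain coin versus a fair coin.
print(entropy([1.0, 0.0]))   # 0.0 -> no uncertainty
print(entropy([0.5, 0.5]))   # 1.0 -> maximal uncertainty for two outcomes

# Second property: with n equally likely events, H grows with n (it equals log2 n).
uniform = [entropy([1 / n] * n) for n in range(1, 9)]
print([round(h, 3) for h in uniform])

# Third property: the two-flip process gives x = 0, 1, 2 with probabilities 1/2, 1/4, 1/4.
direct = entropy([0.5, 0.25, 0.25])
# First choice: heads or tails; with probability 1/2 (tails) a second fair choice follows.
two_stage = entropy([0.5, 0.5]) + 0.5 * entropy([0.5, 0.5])
print(direct, two_stage)     # 1.5 1.5
```

Both ways of counting give 1.5 bits. This is the consistency that the recursive property demands.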
Hello, cybersecurity enthusiasts and white hackers!

This post is the result of my own research on Shannon entropy and how to use it for malware analysis in practice.

Simply put, in communication terminology, Shannon entropy is the quantity of information contained in a message. For a file, entropy is a measure of the unpredictability of the file's data. Shannon entropy is named after the famous mathematician Claude Shannon.

Now let me unfold the relationship between malware and entropy. Malware authors are clever and advanced, and they use many tactics and tricks to hide malware from AV engines. As you know from my previous posts, this is usually something like payload encryption or function call obfuscation. But when the author compresses or encrypts the data and inserts harmful code into the original file, the unpredictability of the data goes up. That raises its entropy, and this is where we can catch the file based on its entropy value. So, the greater the entropy, the more likely the data is obfuscated or encrypted, and the more probable it is that the file is malicious.

So how do you calculate Shannon's entropy?

$$H(p_1, \ldots, p_n) = -\sum_{i=1}^{n} p_i \log_2 p_i = \sum_{i=1}^{n} p_i \log_2 \frac{1}{p_i}$$

The right-hand side of the formula is a summation, over every unique character $i$ in the input, of the term $p_i \log_2 \frac{1}{p_i}$. Rewriting $-\log_2 p_i$ as $\log_2 \frac{1}{p_i}$ uses a well-known identity of the logarithm. The factor $\log_2 \frac{1}{p_i}$ is the most important part of the equation, because it assigns higher numbers to rarer events and lower numbers to common events. $p_i$ represents the proportion of each unique character in the input.

In the Python language it looks like this:

Demo 3

```python
import argparse

# XOR function to encrypt data
def xor(data, key):
    key = str(key)
    l = len(key)
    output_str = ""
    # data may be a str (characters) or bytes (ints); normalize both to int codes
    ordd = lambda x: x if isinstance(x, int) else ord(x)
    for i in range(len(data)):
        current = data[i]
        current_key = key[i % l]  # repeat the key across the whole input
        output_str += chr(ordd(current) ^ ord(current_key))
    return output_str

# encrypting
def xor_encrypt(data, key):
    ciphertext = xor(data, key)
    # ciphertext_str = ' ...   (the rest of this line is cut off in the source)
    return ciphertext
```
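The entropy formula above is usually applied to a file's raw bytes, with $p_i$ taken as the proportion of each byte value. The sketch below is not the author's original script. `shannon_entropy()`, `file_entropy()` and `xor_bytes()` are hypothetical helpers. Entropy is measured in bits per byte, so it ranges from 0 to 8. The output of `os.urandom()` stands in for well-encrypted data.

```python
import math
import os
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Entropy of `data` in bits per byte: sum of p_i * log2(1 / p_i),
    where p_i is the proportion of each unique byte value in the input."""
    if not data:
        return 0.0
    total = len(data)
    return sum((count / total) * math.log2(total / count)
               for count in Counter(data).values())


def file_entropy(path: str) -> float:
    """Entropy of a whole file on disk (hypothetical helper for illustration)."""
    with open(path, "rb") as f:
        return shannon_entropy(f.read())


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """Repeating-key XOR on bytes, the same idea as xor() from demo 3."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(data))


if __name__ == "__main__":
    plain = b"A" * 1024                    # one repeated byte: no uncertainty
    xored = xor_bytes(plain, b"secret")    # key spreads it over 5 byte values
    random_like = os.urandom(1024)         # stand-in for well-encrypted data
    for label, blob in [("plain", plain), ("xor", xored), ("random", random_like)]:
        print(f"{label:>6}: {shannon_entropy(blob):.3f} bits/byte")
```

The repeated byte scores 0.000. The XOR-ed buffer scores a few bits per byte: the key `secret` maps the single plaintext byte onto 5 distinct values, so the result is at most $\log_2 5 \approx 2.32$. The random buffer typically lands close to the 8 bits/byte maximum. In this example a short repeating XOR key raises entropy far less than random-looking ciphertext does.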
